Introduction to Modern Asset Health Monitoring

The industrial landscape is undergoing a profound and accelerating paradigm shift, transitioning from reactive, run-to-failure maintenance models to sophisticated predictive maintenance (PdM) frameworks. At the core of this evolution is the imperative to make the most of the P-F interval: on the P-F curve, the time between the point at which a developing fault first becomes detectable (potential failure, P) and the point at which the asset can no longer perform its function (functional failure, F). Detecting faults earlier in this interval allows industrial facilities to replace costly emergency repairs with planned, strategic interventions, thereby mitigating unscheduled downtime, optimizing labor allocation, and substantially extending the lifecycle of critical assets.

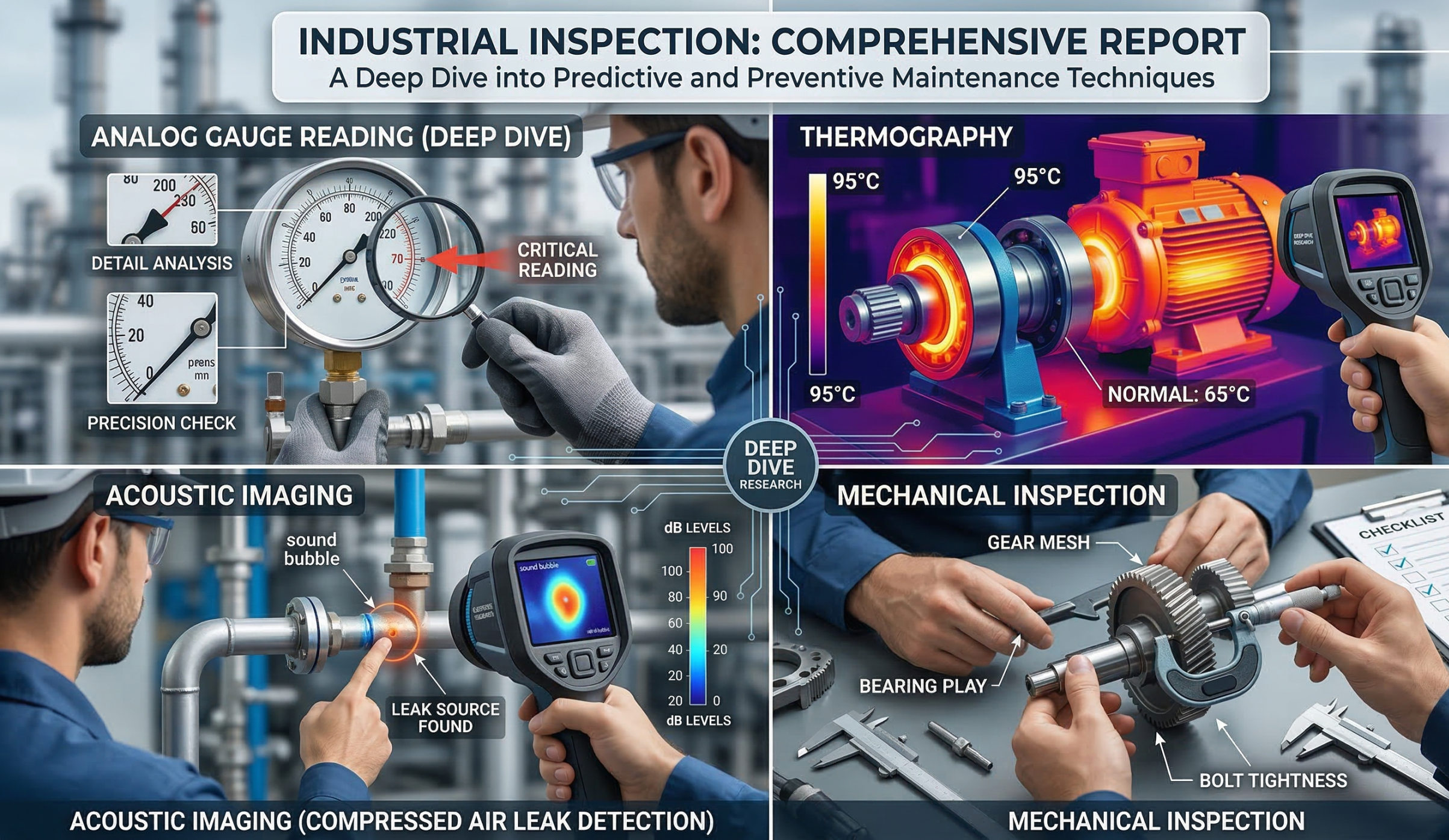

Predictive maintenance relies heavily on the continuous collection, processing, and analysis of operational data through non-destructive testing (NDT) methodologies and condition monitoring technologies. The contemporary PdM ecosystem integrates multiple discrete modalities, most notably visual intelligence (computer vision for analog gauge digitization), infrared thermography, acoustic imaging (ultrasound), and mechanical vibration analysis. By leveraging advanced sensor networks, Edge Artificial Intelligence (AI), and centralized digital twin architectures, organizations can achieve a holistic, real-time assessment of asset health across global operations. This exhaustive report provides a rigorous technical analysis of these critical condition-monitoring technologies, exploring their theoretical foundations, algorithmic implementations, operational limitations, and the stringent international standards governing their application in the field.

Artificial Intelligence in Analog Gauge Digitization

Despite the rapid proliferation of digital interfaces and Internet of Things (IoT) sensors associated with Industry 4.0, a vast majority of industrial plants, utility infrastructure, and legacy manufacturing systems continue to rely on analog gauges. These mechanical instruments measure dynamic physical quantities such as internal pressure, localized temperature, flow rates, and fluid levels. Manual gauge monitoring introduces significant operational inefficiencies: it is labor-intensive, a single inspection route in a large facility can take hours to complete, and the readings are vulnerable to human transcription errors. Operators are also subject to cognitive biases and complacency during repetitive inspection rounds, often overlooking subtle deviations because they have grown accustomed to the routine visual state of the equipment. Infrequent manual readings also create critical “maintenance blind spots,” in which severe pressure or thermal deviations go unnoticed between inspection intervals, potentially leading to dangerous, catastrophic failures. To address this systematically, automated gauge reading systems using computer vision and deep learning have emerged as a foundational capability of modern industrial visual intelligence.

Computer Vision Pipelines and Algorithmic Frameworks

The accurate digitization of an analog gauge requires a highly robust, multi-stage computer vision pipeline capable of isolating the instrument, correcting geometric and perspective distortions, identifying scale boundaries, and calculating the precise trajectory of the indicator needle. The process invariably begins with image acquisition and preprocessing. When processing continuous video feeds, optical flow algorithms are frequently employed to stabilize the frame, mitigating the complex noise introduced by heavy machinery vibration or environmental motion. Subsequent image processing often utilizes specific color space transformations—most notably the Hue, Saturation, Value (HSV) model—combined with morphological operations (such as dilation and erosion) to isolate the primary dial region from highly complex, cluttered industrial backgrounds.
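The HSV thresholding and morphological cleanup described above can be sketched in plain Python so that it runs without OpenCV; in a production pipeline, `cv2.cvtColor`, `cv2.inRange`, and `cv2.morphologyEx` would typically do the same work. The function names, the 3x3 structuring element, and the threshold values are illustrative assumptions, not parameters from the source.

```python
import colorsys

def hsv_mask(rgb_image, h_range, s_min, v_min):
    """Binary mask (lists of 0/1) of pixels whose HSV values fall in range.

    rgb_image holds (r, g, b) tuples in 0..255. h_range is (low, high) and
    s_min, v_min are thresholds, all on colorsys's 0..1 scale.
    """
    mask = []
    for row in rgb_image:
        out_row = []
        for r, g, b in row:
            h, s, v = colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)
            inside = h_range[0] <= h <= h_range[1] and s >= s_min and v >= v_min
            out_row.append(1 if inside else 0)
        mask.append(out_row)
    return mask

def _morph(mask, erode):
    """3x3 erosion (erode=True) or dilation (erode=False).

    Neighbours outside the image are ignored rather than padded.
    """
    rows, cols = len(mask), len(mask[0])
    result = [[0] * cols for _ in range(rows)]
    for y in range(rows):
        for x in range(cols):
            window = [mask[j][i]
                      for j in range(max(0, y - 1), min(rows, y + 2))
                      for i in range(max(0, x - 1), min(cols, x + 2))]
            result[y][x] = int(all(window)) if erode else int(any(window))
    return result

def open_mask(mask):
    """Morphological opening: erosion then dilation removes isolated specks
    smaller than the structuring element while keeping larger regions."""
    return _morph(_morph(mask, erode=True), erode=False)
```

Opening is used here because the goal is to suppress small background clutter that happens to share the dial's color; a closing (dilation then erosion) would instead fill small holes inside the dial region.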

Once the gauge is isolated from the background, deep learning classification models are used to assess and correct the spatial orientation of the instrument. In highly dynamic environments, robotic crawlers, automated drones, or handheld mobile devices may capture images of gauges from arbitrary and unpredictable angles. State-of-the-art software implementations use lightweight convolutional neural networks (CNNs), such as MobileNet implemented in PyTorch, trained to classify the rotation of the gauge into discrete orientation classes. The predicted orientation is then fed into a deterministic orientation correction model, which generates a rectified, upright image so that the zero-angle reference point is consistently positioned for accurate geometric analysis.
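The deterministic correction step that follows the classifier can be sketched as follows. The CNN itself is out of scope; this sketch assumes, hypothetically, that it outputs one of 36 classes spaced 10° apart, and it implements the correction as a nearest-neighbour rotation in plain Python. A real system would more likely use an image library, for example OpenCV's `cv2.getRotationMatrix2D` with `cv2.warpAffine`.

```python
import math

def class_to_angle(class_index, num_classes=36):
    """Map a rotation-classifier output to degrees, assuming (hypothetically)
    num_classes bins evenly spaced over 360 degrees."""
    return (class_index % num_classes) * 360.0 / num_classes

def rotate(image, angle_deg, fill=0):
    """Rotate a 2D list of pixels about its center by angle_deg.

    Angles are measured in the (column, row) frame; because rows grow
    downward, a positive angle appears clockwise on screen. Uses inverse
    mapping with nearest-neighbour sampling; pixels that map outside the
    source image are set to fill.
    """
    n_rows, n_cols = len(image), len(image[0])
    cy, cx = (n_rows - 1) / 2, (n_cols - 1) / 2
    a = math.radians(angle_deg)
    cos_a, sin_a = math.cos(a), math.sin(a)
    out = [[fill] * n_cols for _ in range(n_rows)]
    for r in range(n_rows):
        for c in range(n_cols):
            # Find the source pixel that the rotation carries onto (r, c).
            dx, dy = c - cx, r - cy
            src_c = round(cx + cos_a * dx + sin_a * dy)
            src_r = round(cy - sin_a * dx + cos_a * dy)
            if 0 <= src_r < n_rows and 0 <= src_c < n_cols:
                out[r][c] = image[src_r][src_c]
    return out

def upright(image, predicted_class, num_classes=36):
    """Undo the rotation reported by the (hypothetical) classifier."""
    return rotate(image, -class_to_angle(predicted_class, num_classes))
```

Treating orientation as classification rather than regression keeps the network small and its output bounded; the cost is angular quantization equal to the bin width, which the later geometric steps must tolerate.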

Following geometric rectification, the system must extract the semantic features of the gauge face. Advanced algorithms utilize Principal Component Analysis (PCA) to detect the distribution and orientation of individual scale marks. By calculating the primary eigenvector of each distinct scale mark, the system can determine the geometric center of the gauge by mathematically locating the intersection point of these derived vectors. Concurrently, Optical Character Recognition (OCR) engines scan the scene text to identify the numerical progression of the tick labels, while Hough Transform algorithms isolate the linear structural elements to accurately locate the indicator needle.
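The PCA-based center estimate can be sketched as below, assuming each tick mark has already been segmented into a list of (x, y) pixel coordinates (a hypothetical input format). Each mark's principal eigenvector is computed in closed form from its 2x2 covariance matrix. Because real tick axes never meet at exactly one point, the "intersection" is taken here as the least-squares point closest to all of the axes, which is one reasonable choice rather than the method of any particular system.

```python
import math

def principal_axis(points):
    """Return (centroid, unit direction of the principal eigenvector) for a
    tick mark given as a list of (x, y) pixel coordinates."""
    n = len(points)
    mx = sum(p[0] for p in points) / n
    my = sum(p[1] for p in points) / n
    sxx = sum((p[0] - mx) ** 2 for p in points) / n
    syy = sum((p[1] - my) ** 2 for p in points) / n
    sxy = sum((p[0] - mx) * (p[1] - my) for p in points) / n
    # Major-axis angle of a symmetric 2x2 covariance matrix (closed form).
    theta = 0.5 * math.atan2(2 * sxy, sxx - syy)
    return (mx, my), (math.cos(theta), math.sin(theta))

def least_squares_intersection(lines):
    """Point minimising the summed squared distance to lines given as
    (point, unit_direction); solves sum(I - d d^T) x = sum(I - d d^T) p."""
    a11 = a12 = a22 = b1 = b2 = 0.0
    for (px, py), (dx, dy) in lines:
        # Projector onto the line's normal direction: I - d d^T.
        m11, m12, m22 = 1 - dx * dx, -dx * dy, 1 - dy * dy
        a11 += m11
        a12 += m12
        a22 += m22
        b1 += m11 * px + m12 * py
        b2 += m12 * px + m22 * py
    det = a11 * a22 - a12 * a12
    return ((a22 * b1 - a12 * b2) / det, (a11 * b2 - a12 * b1) / det)
```

Usage: `least_squares_intersection([principal_axis(t) for t in ticks])` returns the estimated gauge center in pixel coordinates.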

The final measurement calculation relies on fundamental trigonometry. By establishing a localized Cartesian coordinate system with the origin perfectly aligned at the calculated gauge center, the algorithm computes the angle of the pointer relative to the absolute minimum and maximum scale values. The digital readout is then determined through a precise linear interpolation of the calculated angle against the arithmetic progression extracted via the OCR engine. This final computation strictly accounts for the specific rotational convention of the gauge, adjusting the logic depending on whether the scale increments in a clockwise or counterclockwise direction.
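The interpolation can be written as value = v_min + (θ_travelled / θ_sweep)(v_max − v_min), where θ_sweep is the angle between the first and last scale marks and θ_travelled is the angle from the first mark to the needle, both measured in the gauge's direction of increase. A minimal sketch, assuming the center, needle tip, end-mark angles, and end values have already been produced by the earlier stages (the function signature is hypothetical):

```python
import math

def read_gauge(center, tip, min_angle, max_angle, min_value, max_value,
               clockwise=True):
    """Linearly interpolate a needle reading.

    center and tip are (x, y) pixel coordinates with y growing downward.
    min_angle and max_angle are the directions, in degrees counterclockwise
    from the +x axis (y up), of the first and last scale marks. Assumes the
    needle lies inside the printed sweep; the dead zone is not handled.
    """
    dx = tip[0] - center[0]
    dy = center[1] - tip[1]          # flip y so angles run counterclockwise
    needle = math.degrees(math.atan2(dy, dx)) % 360
    if clockwise:                     # values grow as the needle turns clockwise
        sweep = (min_angle - max_angle) % 360
        travelled = (min_angle - needle) % 360
    else:                             # values grow counterclockwise
        sweep = (max_angle - min_angle) % 360
        travelled = (needle - min_angle) % 360
    return min_value + (travelled / sweep) * (max_value - min_value)
```

For a common 0-10 bar clockwise dial whose zero mark sits at 225° and full-scale mark at −45°, a needle pointing straight up reads `read_gauge((100, 100), (100, 40), 225, -45, 0, 10)`, which is 5.0 bar.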

Performance Benchmarks and Deep Learning Architectures

The operational efficacy of automated gauge reading depends heavily on the configuration of the underlying neural network architecture. Empirical studies comparing programmatic approaches have found deep residual learning models to perform best for this application. In one evaluation using a curated dataset of 1,848 industrial pressure gauge images (0-10 bar range), a hybrid approach combining the OpenCV library with the ResNet18 architecture achieved the highest accuracy of the approaches tested, clearly outperforming both the VGG19 architecture and a traditional computer vision approach combining OpenCV with scikit-image.

The success of ResNet18 suggests that deep residual networks are well suited to extracting the subtle, high-frequency spatial features needed to distinguish fine, metallic pointer lines from overlapping shadows, dirt, or dense scale marks. Highly optimized algorithms also report low relative reading errors under standard, controlled conditions, and advanced scale-mark-based gauge reading (SGR) algorithms are reported to keep average errors low even when the environment introduces severe perspective distortion or fluctuating illumination.

Optical Constraints: Parallax Error, Glare, and Environmental Variables

Despite these advanced algorithmic capabilities, computer vision systems still face significant optical and physical limitations. One of the most pervasive challenges in precision metrology is parallax error: because the pointer sits slightly above the printed scale, viewing the gauge from an oblique angle makes the pointer appear displaced against the scale marks. Trained human operators can mitigate parallax by aligning their line of sight perpendicular to the dial, but fixed cameras and mobile robotic inspectors may lack the physical articulation required to achieve a perfectly orthogonal viewpoint.

Historically, high-quality analog meters reduced parallax error with a mirrored band on the dial alongside the scale: if the operator or camera can see the reflection of the needle, the viewing angle is not perpendicular, and the reading is taken only when the needle hides its own reflection. Because fixed or robotic cameras often cannot reposition themselves to exploit this mechanism, deep learning models must be trained on extensive datasets featuring heavy perspective distortion so that they can compensate for off-axis viewing geometries where physical correction is impossible.
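The size of the effect follows from simple geometry: a needle raised a height h above the scale and viewed at an angle θ from the dial normal appears shifted along the dial by about h·tan θ. A minimal sketch converting that shift into a reading error, assuming the offset is tangential to the scale (the worst case) and using purely illustrative dimensions:

```python
import math

def parallax_error(gap_mm, view_angle_deg, radius_mm, sweep_deg, span):
    """Approximate reading error, in scale units, caused by parallax.

    gap_mm: assumed height of the needle above the printed scale.
    view_angle_deg: viewing angle from the dial normal, assumed tangential.
    radius_mm: distance from the pivot to where the needle is read.
    sweep_deg, span: angular sweep and value range of the printed scale.
    """
    shift_mm = gap_mm * math.tan(math.radians(view_angle_deg))  # apparent shift
    angular_error_deg = math.degrees(shift_mm / radius_mm)       # small-angle approx.
    return angular_error_deg * span / sweep_deg
```

With a hypothetical 2 mm gap, a 45° viewing angle, a 40 mm reading radius, and a 270° sweep covering 10 bar, the error is roughly 0.11 bar, which is large compared with the scale's finest graduations on many industrial gauges.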

Highly reflective surfaces present another critical impediment to visual intelligence. Glass or acrylic gauge covers frequently produce intense glare, localized hotspots, and mirror-like reflections that can obscure the pointer or create false structural artifacts that confuse line-detection algorithms. These reflections are notoriously inconsistent, blending into the surrounding scene or disappearing entirely depending on the angle of the incident light. Small, transient variations in ambient lighting, airborne dust, equipment vibration, or internal condensation can also severely degrade inspection accuracy. To counteract these variables, advanced imaging solutions combine polarized filtering, diffuse artificial lighting arrays, and dynamic exposure bracketing to normalize the visual input before algorithmic processing.
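Exposure bracketing can be illustrated with a minimal merge of several 8-bit frames taken at different exposure times. This sketch assumes a linear sensor response (real cameras need a calibrated response curve) and treats clipped pixels, such as glare hotspots, as unusable; the thresholds and function name are hypothetical.

```python
def merge_exposures(frames, exposure_times, low=10, high=245):
    """Estimate relative scene radiance per pixel from bracketed frames.

    frames: list of 2D lists of 8-bit values, one per exposure.
    For each pixel, samples outside [low, high] are treated as under- or
    over-exposed (e.g. glare) and ignored; usable samples are divided by
    their exposure time and averaged. Pixels with no usable sample -> None.
    """
    rows, cols = len(frames[0]), len(frames[0][0])
    merged = [[None] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            estimates = [frame[r][c] / t
                         for frame, t in zip(frames, exposure_times)
                         if low <= frame[r][c] <= high]
            if estimates:
                merged[r][c] = sum(estimates) / len(estimates)
    return merged
```

A pixel saturated by glare in the long exposure is recovered from the short one; a pixel saturated in every frame stays `None`, which a downstream reader could treat as an occlusion mask rather than as a pointer edge.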

The Environmental Footprint of Artificial Intelligence

As facilities scale the deployment of computer vision for predictive maintenance, the environmental and computational footprint of these AI models must be critically assessed. The rapid rise of generative AI and deep learning has raised serious questions about energy consumption and greenhouse gas (GHG) emissions. Data centers, which supply the computational power required to train complex visual models, are substantial sources of carbon emissions; in the United States alone, data centers were reported to produce a substantial volume of CO2-equivalent emissions in a single year, with a carbon intensity higher than the national average.

Comprehensive measurement frameworks assessing the environmental impact of AI—such as those evaluating Google’s Gemini models—track total energy per prompt, market-based emissions, and the extensive water consumption required for server cooling. Because training and serving models with billions of parameters requires exorbitant energy, industrial applications must prioritize “frugal AI.” This underscores the necessity of utilizing highly optimized, lightweight architectures like ResNet18 and PyTorch MobileNet that can be deployed directly on low-power edge devices (such as Raspberry Pi microcomputers) rather than relying on continuous, high-latency cloud computing. Processing visual data locally not only reduces the carbon footprint associated with data transmission but also ensures continuous operational capability even during severe network interruptions.

Infrared Thermography and Thermodynamic Diagnostics

Infrared thermography (IRT) is a mature, non-invasive, and non-contact diagnostic technique that visualizes the thermal energy emitted by physical assets. Operating on the fundamental physical principle that all objects with a temperature above absolute zero emit infrared radiation, thermographic cameras capture this invisible portion of the electromagnetic spectrum and translate it into a visual thermogram. Within these thermograms, predefined color palettes map to temperature gradients, allowing operators to visualize heat flow. Because incipient equipment failures frequently alter the thermal baseline of an asset, typically by generating excess heat through mechanical friction or electrical resistance or by impairing heat dissipation, IRT serves as a highly sensitive early warning system within the broader PdM framework.
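Two textbook radiation laws explain why thermal cameras work in the infrared band and why emissivity matters: Wien's displacement law gives the wavelength of peak emission, and the Stefan-Boltzmann law gives the total power radiated by a grey body with emissivity ε. A minimal sketch (the example temperatures are illustrative):

```python
WIEN_B = 2.897771955e-3   # Wien displacement constant, m*K
SIGMA = 5.670374419e-8    # Stefan-Boltzmann constant, W*m^-2*K^-4

def peak_wavelength_um(temp_c):
    """Wavelength of peak blackbody emission, in micrometres (Wien's law)."""
    return WIEN_B / (temp_c + 273.15) * 1e6

def radiant_exitance(temp_c, emissivity=1.0):
    """Total power emitted per unit area, W/m^2: M = emissivity * sigma * T^4."""
    return emissivity * SIGMA * (temp_c + 273.15) ** 4
```

At 20 °C the emission peak lies near 9.9 µm, inside the long-wave infrared band, far from visible light. The T⁴ dependence means a bearing housing at 80 °C radiates about 60% more power than one at 40 °C, while a wrong emissivity setting scales the apparent signal directly, which is why emissivity correction features in thermographer training.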

Thermal Anomalies and Distinct Failure Modes

Thermographic analysis primarily focuses on identifying specific temperature anomalies, which manifest as stark deviations from the historical thermal baseline or the surrounding ambient environment. These anomalies are fundamentally categorized into distinct thermal signatures, each indicative of specific mechanical or electrical failure modes:

- Electrical Resistance and Hotspots: In electrical distribution systems, high-resistance terminal blocks and loose connections, as well as overloaded circuits carrying excess current, dissipate electrical power as heat (Joule heating), creating a highly detectable hotspot. Thermography can clearly reveal these high-resistance joints, degrading transformers, winding asymmetries, and failing insulation well before they precipitate dangerous arc flash hazards or catastrophic electrical fires.

- Mechanical Friction and Wear: Rotating machinery, such as large industrial motors, fluid pumps, and gas compressors, relies heavily on precision bearings to minimize friction. As lubrication degrades or becomes contaminated, or as bearings wear, the resulting friction generates substantial thermal energy. Infrared thermography reliably detects these abnormal heat patterns, as well as the uneven thermal signatures indicative of shaft misalignment, structural overloading, or eccentric rotation.

- Process Equipment and Fluid System Anomalies: Beyond rotating and electrical equipment, IRT is extensively deployed to monitor the integrity of static process infrastructure. Cold spots or highly uneven temperature distributions across heat exchangers strongly suggest internal fouling, particulate blockages, or compromised heat transfer efficiency. Thermal imaging also identifies degraded pipeline and vessel insulation, pinpointing the exact areas of energy loss.
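The Joule-heating mechanism behind electrical hotspots is P = I²R. A minimal sketch, using hypothetical joint resistances, shows why a degraded connection stands out thermally and why the same joint runs much hotter at higher load:

```python
def joule_heating_w(current_a, resistance_ohm):
    """Power dissipated as heat in a resistive joint: P = I^2 * R."""
    return current_a ** 2 * resistance_ohm

# Hypothetical values: a healthy bolted joint (50 micro-ohm) versus a
# loose or corroded one (500 micro-ohm), both carrying 400 A.
healthy_w = joule_heating_w(400, 50e-6)    # about 8 W
degraded_w = joule_heating_w(400, 500e-6)  # about 80 W
```

A tenfold rise in contact resistance produces a tenfold rise in local heat, and doubling the current quadruples it, which is why load conditions must be recorded alongside any thermal finding.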

Standardization, Load Conditions, and Severity Criteria

The scientific interpretation of thermal data requires rigorous, unwavering adherence to standardized severity criteria. Temperature alone is frequently an unreliable and misleading indicator of absolute failure severity, as physical temperatures fluctuate dynamically with ambient environmental conditions, solar gain, thermal reflections, and operational loads. Consequently, certified thermographers do not rely solely on absolute temperatures; instead, they use the ΔT (Delta T) concept. ΔT represents the calculated temperature difference between the anomalous component and a strictly defined reference point, such as ambient air or an identically loaded, healthy component situated within the same environment.

The application of ΔT analysis is governed by standards such as the National Fire Protection Association (NFPA) 70B and the InterNational Electrical Testing Association (NETA) Maintenance Testing Specifications. NFPA 70B mandates routine, documented infrared inspections of energized electrical systems, which implicitly requires equipment capable of capturing and calculating ΔT both on the physical device and within the post-processing reporting software. Because the heat generated at an electrical connection scales with the square of the current (P = I²R), connection temperatures vary strongly and non-linearly with load; NETA therefore recommends conducting inspections above a minimum percentage of rated load, or at the highest normal operating load whenever possible, to ensure an accurate thermal representation. The quantitative findings are then classified using standardized severity matrices, often color-coded Green, Yellow, Orange, and Red, which dictate the urgency of the required maintenance intervention based on the magnitude of the temperature rise.
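The ΔT workflow can be sketched in three steps: compute the rise over a reference, optionally scale it to rated load, then map it to a severity band. The load-scaling exponent of 2 follows from P = I²R but is an assumption (field practice sometimes uses a smaller empirical exponent), and the severity thresholds below are illustrative placeholders, not values taken from NETA or NFPA 70B.

```python
def delta_t(anomaly_c, reference_c):
    """Temperature rise of the suspect component over its reference."""
    return anomaly_c - reference_c

def load_corrected_delta_t(measured_dt, measured_load_a, rated_load_a,
                           exponent=2.0):
    """Scale a measured rise to rated load, assuming heating ~ I^exponent."""
    return measured_dt * (rated_load_a / measured_load_a) ** exponent

# Illustrative upper limits in degrees C; NOT taken from any standard.
SEVERITY_BANDS = [(5.0, "Green"), (15.0, "Yellow"), (35.0, "Orange")]

def severity(dt):
    """Return the first band whose upper limit the rise does not exceed."""
    for limit, label in SEVERITY_BANDS:
        if dt <= limit:
            return label
    return "Red"
```

For example, a lug at 62 °C next to an identical phase at 41 °C gives ΔT = 21 °C; if it was measured at half of rated current, the I² assumption scales it to 84 °C at full load, moving the finding from Orange to Red. This is the practical reason for inspecting at high load.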

Advancements in Automated Thermal Diagnostics

The integration of machine learning (ML) with infrared thermography is shifting thermal diagnostics from a purely manual exercise to an automated, intelligent process. Recent developments have introduced automated, hand-held thermal inspection systems that use 3D object detection and pose estimation algorithms. By modeling thermal behavior and mitigating the effects of image noise, these supervised ML models can classify discrete operating conditions and predict specific mechanical malfunctions with high reported accuracy and short inference times. This integration of spatial awareness and thermal data reduces the cognitive load on human inspectors and standardizes the diagnostic process across complex, highly variable industrial environments.

Acoustic Imaging and Ultrasonic Analysis

While thermography detects the thermal byproducts of failure, acoustic imaging captures the mechanical and fluid-dynamic sounds of degradation. This is particularly valuable for energy conservation. Industrial compressed air systems, often referred to as the “fourth utility” alongside electricity, water, and gas, are notoriously inefficient and expensive to operate. Over the life of an air compression system, the electrical energy required to run it accounts for the large majority of the total lifecycle cost, with initial capital installation representing only a small fraction. Undetected leaks in these extensive pneumatic networks therefore result in significant energy waste and correspondingly higher carbon emissions.

When pressurized air or gas escapes from a system through a small orifice or fissure, the flow transitions from laminar to highly turbulent. This turbulence generates broadband sound with a strong ultrasonic component, above the upper limit of human hearing (approximately 20 kHz). These ultrasonic frequencies are inaudible, and the leak's audible component is easily masked by the low-frequency, broadband noise of a heavy manufacturing environment; the ultrasonic component, however, is highly directional and stands out against the predominantly low-frequency background, making it readily detectable with specialized ultrasonic instrumentation.

The Physics of Acoustic Cameras and Beamforming Algorithms

Modern acoustic imaging relies on acoustic cameras equipped with dense arrays of digital Micro-Electromechanical Systems (MEMS) microphones. A typical advanced device, such as the UltraCam LD 500/510, may use between 30 and 128 precisely arranged microphones. The spatial arrangement of these sensors across the face of the camera is a critical design parameter. Spreading the microphones over a wider aperture increases the spatial resolution of the device, because larger separations produce larger time-of-flight differences between sensors. Conversely, closely spaced microphones are needed to suppress spatial aliasing and the resulting false sources, a particular problem given the extremely short wavelengths of high-frequency ultrasound.
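The tension between aperture and spacing can be made concrete with two rules of thumb: to avoid spatial aliasing, adjacent elements should be no more than half the shortest wavelength apart, and the main-lobe width shrinks roughly as wavelength divided by aperture. A minimal sketch, assuming sound travels at 343 m/s in air and using 40 kHz purely as an example frequency:

```python
import math

SPEED_OF_SOUND = 343.0  # m/s in air at about 20 degrees C (assumed)

def wavelength_mm(freq_hz, c=SPEED_OF_SOUND):
    """Acoustic wavelength in millimetres: lambda = c / f."""
    return c / freq_hz * 1000.0

def max_alias_free_spacing_mm(max_freq_hz, c=SPEED_OF_SOUND):
    """Spatial Nyquist criterion: spacing <= half the shortest wavelength,
    which avoids grating lobes for any steering direction."""
    return wavelength_mm(max_freq_hz, c) / 2.0

def approx_beamwidth_deg(freq_hz, aperture_mm, c=SPEED_OF_SOUND):
    """Rough main-lobe width, wavelength / aperture (radians), in degrees.
    An order-of-magnitude estimate, not an exact beam pattern."""
    return math.degrees(wavelength_mm(freq_hz, c) / aperture_mm)
```

At 40 kHz the wavelength is under 9 mm, so strictly alias-free spacing would be about 4.3 mm, while a 100 mm aperture gives a main lobe roughly 5° wide. Filling a large aperture at half-wavelength pitch would need a very large number of microphones, which is one reason practical arrays use irregular layouts that trade strict aliasing suppression for resolution.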

To translate raw, multi-channel ultrasonic data into a coherent visual map, acoustic cameras use far-field beamforming algorithms, predominantly variations of the “delay and sum” method. Because the acoustic wave from a leak reaches each microphone in the array at a slightly different time, on the order of microseconds, the algorithm applies calculated delays to each channel's signal to align them in the time domain for a chosen look direction. When these delayed signals are summed, emission from the targeted location adds coherently and is amplified, while uncorrelated background noise adds incoherently and is therefore attenuated relative to the target. Repeating this for every point in a grid of look directions produces a sound map, which is overlaid as a high-contrast heatmap, visually akin to a thermal image, onto a standard optical video feed, allowing technicians to pinpoint the mechanical origin of the leak.
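The delay-and-sum idea can be sketched in its narrowband (single-frequency) form, where each time delay becomes a phase shift. This is a simplified illustration for a hypothetical uniform linear array scanning in one dimension, not the algorithm of any particular camera; commercial devices use 2D arrays and broadband processing across many frequency bins.

```python
import cmath
import math

C = 343.0  # speed of sound in air, m/s (assumed)

def steering_delays(num_mics, spacing_m, angle_deg):
    """Far-field arrival delays (s) across a uniform linear array."""
    s = math.sin(math.radians(angle_deg))
    return [m * spacing_m * s / C for m in range(num_mics)]

def simulate_snapshot(num_mics, spacing_m, freq_hz, source_deg):
    """Complex narrowband samples received from one far-field source."""
    return [cmath.exp(-2j * math.pi * freq_hz * tau)
            for tau in steering_delays(num_mics, spacing_m, source_deg)]

def delay_and_sum_power(snapshot, spacing_m, freq_hz, look_deg):
    """Undo each channel's delay for look_deg (as a phase shift), then sum."""
    delays = steering_delays(len(snapshot), spacing_m, look_deg)
    total = sum(x * cmath.exp(2j * math.pi * freq_hz * tau)
                for x, tau in zip(snapshot, delays))
    return abs(total) ** 2

def scan(snapshot, spacing_m, freq_hz, angles):
    """Beamformer output power for each candidate direction."""
    return {a: delay_and_sum_power(snapshot, spacing_m, freq_hz, a)
            for a in angles}
```

Usage: with 16 microphones at half-wavelength spacing for 40 kHz and a simulated leak at 20°, `scan(...)` over −60° to 60° peaks at 20°, where all 16 channels align and the power reaches 16² = 256; every other direction sums with partially cancelling phases.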

Economic and Environmental Impact of Leak Detection

The absolute financial ramifications of compressed air leakage are staggering, making acoustic leak detection one of the fastest Return on Investment (ROI) initiatives in the entire predictive maintenance ecosystem. The volume of lost air scales directly with the operational pressure of the system and the geometric size of the leak orifice. The corresponding energy loss translates directly to elevated operational expenditures and unnecessary emissions.
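The arithmetic behind such estimates is straightforward: the leak's flow rate times the compressor station's specific power gives the wasted electrical power, which multiplied by operating hours gives annual energy, cost, and emissions. A minimal sketch in which every input value is a placeholder assumption, not a figure from the original analysis:

```python
def annual_leak_cost(leak_m3_per_min, specific_power_kw_per_m3_min,
                     hours_per_year, price_per_kwh, kg_co2_per_kwh):
    """Return (energy_kwh, cost, co2_tonnes) wasted by one leak per year.

    specific_power_kw_per_m3_min: electrical kW the compressor station
    needs per m^3/min of delivered free air (site-specific; assumed here).
    """
    power_kw = leak_m3_per_min * specific_power_kw_per_m3_min
    energy_kwh = power_kw * hours_per_year
    cost = energy_kwh * price_per_kwh
    co2_tonnes = energy_kwh * kg_co2_per_kwh / 1000.0
    return energy_kwh, cost, co2_tonnes

# Placeholder inputs: 0.1 m^3/min leak, 6.5 kW per m^3/min, 8,000 h/year,
# 0.15 currency units per kWh, 0.4 kg CO2 per kWh.
energy, cost, co2 = annual_leak_cost(0.1, 6.5, 8000, 0.15, 0.4)
# energy is about 5,200 kWh, cost about 780, co2 about 2.1 t per year
```

Because the cost is linear in flow rate and leak flow grows with orifice area, a handful of large leaks usually dominates the total, which is why acoustic surveys prioritize them.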

The following structured data illustrates the potential annual financial and environmental losses associated with varying leakage rates, assuming a standardized system with fixed annual operating hours, a fixed energy cost, and a fixed specific power requirement:

By using acoustic imaging to prioritize and promptly repair large-volume leaks, particularly at vulnerable connecting elements such as flanges, threaded fittings, couplings, and pneumatic valves, facilities routinely achieve substantial aggregate energy savings. This also helps fulfill ISO 50001 energy management documentation requirements. Aggregated field statistics from platforms such as LEAKReporter show that comprehensive leak management campaigns, covering more than 46,000 surveyed leaks, have identified calculated global losses exceeding €1.8 billion and generated reported savings in the tens of millions of euros.

Limitations: Acoustic Reflections and the EV NVH Paradigm

While highly effective, acoustic imaging has distinct physical limitations, particularly in complex, enclosed environments. Indoors, acoustic reflections off concrete floors, curved metal tanks, and structural walls create phantom “ghost sources.” These multipath reflections interfere with the beamforming algorithm, producing persistent artifacts in the sound map that can easily mislead inexperienced technicians. Furthermore, the finite width of the array's main lobe and its sidelobes let energy from an intense source leak into neighboring directions, so loud sources can mask weaker, adjacent acoustic anomalies, complicating the inspection of dense mechanical manifolds.

The application of acoustic imaging is also encountering new challenges in the automotive sector, specifically in Noise, Vibration, and Harshness (NVH) testing for the growing Electric Vehicle (EV) market. Traditional internal combustion engine (ICE) NVH testing focused heavily on the low-frequency range, where combustion noise dominated and masked subtle mechanical noises. With the ICE removed, the acoustic landscape of the vehicle shifts dramatically. EVs present a host of complex, high-frequency acoustic phenomena that were previously ignored:

| EV Noise Source | Typical Frequency Range | Acoustic Characteristics |
| --- | --- | --- |
| Electric Motor Electromagnetic Noise | | Sharp tonal noise, varies linearly with operational speed |
| Inverter Switching Noise | | High-frequency hum, strictly related to Pulse-Width Modulation (PWM) frequency |
| Gear Meshing Noise | | Particularly prominent in high-torque single-speed reducers |
| Battery Charger Noise | | Near-ultrasonic range, residing at the absolute edge of human perception |

Because the low-frequency masking effect of the engine is gone, wind noise, road friction, gear meshing, and subtle structural rattles become highly exposed and irritating to the consumer. Acoustic cameras are increasingly vital for mapping these high-frequency, transient sounds, allowing automotive engineers to visualize complex noise profiles that traditional, single-microphone NVH tools simply cannot isolate.
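The speed-locked tones in the table above can be located with order arithmetic: a component that produces k events per shaft revolution emits a tone at k × rpm / 60 Hz, so its frequency rises linearly with speed. For a gear, k is its tooth count. A minimal sketch; the tooth count and speed below are hypothetical examples, not values from the source:

```python
def order_frequency_hz(order, shaft_rpm):
    """Frequency of a speed-locked tone: order x shaft rotation rate (rev/s)."""
    return order * shaft_rpm / 60.0

def gear_mesh_frequency_hz(num_teeth, shaft_rpm):
    """Gear meshing frequency: one mesh impact per tooth per revolution."""
    return order_frequency_hz(num_teeth, shaft_rpm)

# Hypothetical 23-tooth input pinion on a motor shaft at 12,000 rpm.
mesh_hz = gear_mesh_frequency_hz(23, 12000)   # 4600.0 Hz
```

Because EV motors spin far faster than ICE crankshafts, even modest tooth counts push gear whine into the kilohertz range, where it is no longer masked by combustion noise.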

Mechanical Inspection and Non-Destructive Testing (NDT) Paradigms

The absolute structural and mechanical integrity of industrial assets is continuously evaluated through a diverse array of Non-Destructive Testing (NDT) methodologies. These specialized techniques are scientifically designed to analyze the physical properties of materials, detect microscopic subsurface discontinuities, and assess structural fatigue without permanently altering, stressing, or destroying the test object. A comprehensive mechanical inspection program utilizes a sophisticated matrix of NDT methods, carefully tailored to the specific material properties, geometries, and predicted failure modes of the asset.

Visual and Surface Inspection Methods

Visual Testing (VT): Visual inspection is the oldest and remains the fundamental prerequisite of all NDT processes. Utilizing both the unassisted eye and optical magnification tools (such as borescopes or mirrors), VT identifies macroscopic surface corrosion, physical deformation, part misalignment, and obvious cracking. Despite its absolute ubiquity, VT is highly susceptible to human subjectivity, visual fatigue, and complacency. Operators performing repetitive, daily inspections often become dangerously desensitized to slow, incremental degradation, missing obvious faults simply because they are psychologically accustomed to the asset’s deteriorating appearance. Consequently, VT is increasingly augmented by robotic crawlers and aerial drones to ensure objective, close-proximity visual assessment of high-risk, difficult-to-reach assets. For example, drones equipped with specialized payloads allow technicians to comprehensively visually inspect 8-10 massive storage tanks in a single day, drastically reducing the need for dangerous scaffolding or rope access.

Liquid Penetrant Testing (PT): To reliably identify fine surface-breaking defects that easily evade visual detection, technicians employ PT. The chemical process involves applying a highly visible (often red) or fluorescent dye to the thoroughly cleaned, non-porous surface of the material. Capillary action naturally draws the liquid deep into microscopic cracks and pores. After carefully removing the excess surface penetrant, a contrasting developer powder is applied, which acts like a sponge, drawing the trapped dye back out to the surface to reveal a distinct, high-contrast visual indication of the underlying flaw. While highly cost-effective and sensitive for non-magnetic materials, PT is strictly limited to surface discontinuities and requires meticulous, time-consuming surface preparation to avoid false negatives.

Electromagnetic and Subsurface Inspection Methods

Magnetic Particle Inspection (MT): For ferromagnetic materials (such as iron and steel), MT provides a highly rapid and sensitive method for detecting both surface and shallow near-surface irregularities. The NDT technician induces a strong magnetic field within the test piece using a yoke or prods. If a discontinuity (such as a fatigue crack or seam) exists perpendicular to the magnetic lines of flux, the magnetic field is forced to “leak” out of the material and into the air. When fine iron particles (applied either as a dry powder or suspended in a wet fluorescent solution) are introduced to the surface, they are strongly attracted to these localized flux leakage fields, vividly clustering around the defect to form a visible indication. MT is extensively utilized in the heavy manufacturing and automotive sectors, as well as the oil and gas industry, for rapidly identifying stress corrosion cracking in critical pipelines and drilling equipment.

Eddy Current Testing (ET): Operating on the complex principles of electromagnetic induction, ET is utilized almost exclusively for inspecting conductive materials and advanced alloys. An alternating electrical current is passed through a specialized test coil, generating a rapidly oscillating magnetic field. When this active coil is brought near the conductive test material, the magnetic field induces a circular flow of electrons—known as eddy currents—within the object itself. Any physical flaws, variations in material thickness, or subtle changes in the material’s electrical conductivity disrupt the smooth flow of these eddy currents. By precisely monitoring changes in the test coil’s electrical impedance, technicians can detect minute, invisible cracks, measure non-conductive coating thickness, and even verify variations in metal heat treatments.
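Why ET is primarily a surface and near-surface method can be seen from the standard depth of penetration, δ = 1 / √(π f μ σ), the depth at which eddy-current density falls to about 37% of its surface value. A minimal sketch; the conductivity used in the example is an assumed round figure for an aluminum alloy:

```python
import math

MU_0 = 4e-7 * math.pi  # vacuum permeability, H/m (classical value)

def standard_depth_mm(freq_hz, conductivity_s_per_m, relative_permeability=1.0):
    """Standard depth of penetration: delta = 1 / sqrt(pi * f * mu * sigma)."""
    mu = MU_0 * relative_permeability
    return 1000.0 / math.sqrt(math.pi * freq_hz * mu * conductivity_s_per_m)

# Assumed conductivity of about 3.5e7 S/m at a 100 kHz test frequency.
depth_mm = standard_depth_mm(100e3, 3.5e7)   # roughly 0.27 mm
```

Quadrupling the test frequency halves the penetration depth, so technicians trade depth for sensitivity to small surface cracks when choosing a probe frequency; ferromagnetic materials, with large relative permeability, confine eddy currents even closer to the surface.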

Volumetric and Acoustic Modalities

Ultrasonic Testing (UT): UT introduces high-frequency mechanical vibrations (sound waves) directly into the internal structure of a solid material. Operating typically in the pulse-echo mode, a piezoelectric transducer sends short, discrete bursts of high-frequency ultrasound through the medium. When the traveling sound wave encounters an acoustic impedance mismatch—such as the opposite back wall of the material or a hidden internal void, inclusion, or crack—a distinct portion of the acoustic energy is reflected back to the transducer. By mathematically analyzing the precise time-of-flight and the amplitude of the reflected signal, UT accurately characterizes the exact depth, spatial size, and orientation of deep volumetric defects that are completely invisible from the surface.
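Two relationships underlie the pulse-echo description above: reflector depth is half the round-trip path (velocity × time / 2), and the fraction of intensity reflected at a boundary at normal incidence is ((Z₂ − Z₁)/(Z₂ + Z₁))². A minimal sketch; the steel velocity and impedance values in the comments are assumed typical figures:

```python
def reflector_depth_mm(round_trip_us, velocity_m_per_s):
    """Pulse-echo depth: the pulse travels down and back, so halve the path."""
    return velocity_m_per_s * round_trip_us * 1e-6 / 2.0 * 1000.0

def intensity_reflection(z1, z2):
    """Fraction of incident intensity reflected at a boundary between media
    with acoustic impedances z1 and z2 (normal incidence)."""
    return ((z2 - z1) / (z2 + z1)) ** 2

# Assumed longitudinal velocity in steel of about 5,900 m/s:
depth = reflector_depth_mm(10.0, 5900.0)     # about 29.5 mm
# Assumed impedances (MRayl): steel about 45, air about 0.0004.
steel_to_air = intensity_reflection(45.0, 0.0004)
```

A steel-to-air interface, such as a crack face or the back wall, reflects almost all of the incident energy, which is why gas-filled cracks give strong echoes and why a couplant is needed between transducer and part.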

Acoustic Emission Testing (AE): Unlike UT, which actively transmits sound into a material, AE is an entirely passive monitoring technique. Highly sensitive piezoelectric sensors are attached directly to a structure to continuously “listen” for the transient elastic stress waves generated by the rapid release of strain energy within the material itself. When a material undergoes mechanical stress, localized yielding, or the active propagation of a micro-crack, it produces a distinct, high-frequency acoustic burst. AE is therefore uniquely capable of detecting the active, real-time progression of a structural defect under load, giving it a significant timing advantage over other diagnostic methods. In large rotating machinery, continuous AE monitoring can provide very early warning of tribological issues and lubrication breakdown, often detecting incipient friction before enough thermal energy is generated for thermographic detection, and before the developing defect produces enough vibration to trigger conventional vibration-analysis alerts.
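A basic building block of AE processing is hit detection: a burst is registered when the signal first crosses an amplitude threshold, and later crossings are grouped into the same hit until a quiet period has elapsed (often called the hit definition time). A simplified sketch on a sampled waveform; the threshold and dead time are hypothetical parameters expressed in sample units:

```python
def detect_hits(samples, threshold, dead_time):
    """Return start indices of AE hits in a sampled waveform.

    A hit starts when |sample| first reaches threshold. Further crossings
    belong to the same hit until more than dead_time samples pass with
    no crossing, after which the next crossing opens a new hit.
    """
    hits = []
    last_crossing = None
    for i, x in enumerate(samples):
        if abs(x) >= threshold:
            if last_crossing is None or i - last_crossing > dead_time:
                hits.append(i)
            last_crossing = i
    return hits
```

Counting hits per unit time, and tracking how they grow while load is held constant, is one simple way to turn the passive AE signal into the kind of active-damage indicator described above.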

Certification, Standards, and Personnel Competency

The ultimate diagnostic efficacy of any PdM technology or NDT methodology is inexorably linked to the technical competency and cognitive ability of the human operator analyzing the data. An incorrectly configured ultrasonic analyzer, a misunderstood beamforming map, or a miscalibrated thermal emissivity setting can render data entirely useless or, catastrophically, lead to dangerous operational decisions. Consequently, the industrial maintenance sector mandates strict, uncompromising adherence to international qualification and certification frameworks.

Two primary bodies govern global NDT and condition monitoring certification: the American Society for Nondestructive Testing (ASNT) and the International Organization for Standardization (ISO).

ASNT Employer-Based and Central Certification Frameworks

In North America, ASNT provides widely adopted guidance primarily through Recommended Practice SNT-TC-1A and the ANSI/ASNT CP-189 standard. These documents establish rigorous employer-based certification protocols for Level I, Level II, and Level III personnel across distinct NDT methods. Because certification is employer-based, the employing company is ultimately responsible for certifying that its employees meet the ASNT requirements. For example, achieving Level II certification in Thermal/Infrared Testing (TIR) under the CP-189 framework requires a minimum number of hours of formal classroom training coupled with documented, supervised practical experience in specific techniques such as electrical and mechanical testing. Vibration Analysis similarly specifies minimum hours of intensive training and field experience for Level II. To address the need for globally portable credentials, ASNT also administers central certification through its ASNT 9712 program, which aligns closely with ISO 9712 to support international recognition of technical competence independent of a specific employer.

ISO 18436 Standard Series

Internationally, the qualification and assessment of condition monitoring and diagnostic personnel is addressed by the ISO 18436 series. Unlike ASNT’s traditional employer-based model, ISO 18436 relies on independent, third-party assessment through accredited certification bodies, providing an impartial evaluation of a candidate’s theoretical knowledge and practical competency.

The ISO 18436 series defines a multi-category classification system (typically Categories I through IV for vibration analysis, and Categories I through III for other methods), with each part mapped to a specific technology domain:

- ISO 18436-2 (Vibration Analysis): This standard focuses exclusively on vibration condition monitoring. A Category I analyst performs basic data acquisition on pre-determined plant routes, while Category III and IV analysts are expected to design complex, plant-wide monitoring programs, perform advanced operational deflection shape (ODS) analysis, execute transient machine diagnostics, and calculate structural resonance frequencies.

- ISO 18436-6 (Acoustic Emission): Governs the specialized field of Acoustic Emission testing. Category II personnel are qualified to independently select appropriate hardware, define application limitations, and analyze complex AE burst data to recommend corrective actions to plant management.

- ISO 18436-7 (Thermography): Specifies the requirements for thermography personnel. Candidates must demonstrate an understanding of heat transfer, emissivity correction, spatial resolution limitations, and the effects of environmental variables on measurement accuracy.

- ISO 18436-8 (Ultrasound): Covers ultrasound condition monitoring, setting requirements for compressed gas leak detection, steam trap inspection, and high-frequency bearing monitoring. Distinctive to this standard is an explicit requirement for regular audiometric examinations: because inspectors interpret heterodyned ultrasonic signals (shifted down into the audible range) by ear, they must possess sufficient natural or corrected hearing acuity, demonstrated by an average hearing level at or below a specified threshold across standard pure-tone test frequencies.

Data Fusion, Digital Twins, and Centralized Dashboards

The real potential of Industry 4.0 and the emerging Industry 5.0 paradigm lies not in using these diagnostic technologies in isolation, but in integrating them. A modern industrial facility simultaneously generates large, heterogeneous datasets: thermal images indicating subtle heat gradients, high-frequency ultrasonic spectra denoting micro-leaks, fast Fourier transform (FFT) vibration spectra highlighting structural resonances, and computer vision outputs continuously tracking analog gauge readings. Processing these data streams in isolated silos limits predictive accuracy and obscures systemic failure patterns.
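
An FFT vibration plot is a single-sided amplitude spectrum of a sampled vibration signal. The Python sketch below computes one with a Hann window, scaled so a pure sinusoid peaks near its true amplitude. The signal is synthetic: a hypothetical 29.5 Hz running speed (1×), a smaller 2× component, and broadband noise.

```python
import numpy as np

def amplitude_spectrum(signal, fs):
    """Single-sided, Hann-windowed amplitude spectrum (amplitude-corrected)."""
    x = np.asarray(signal, dtype=float)
    x = x - x.mean()                     # remove DC offset
    window = np.hanning(x.size)
    spectrum = np.fft.rfft(x * window)
    # Divide by the window sum and double for the one-sided spectrum,
    # so a sinusoid of amplitude A produces a peak close to A
    amplitude = 2 * np.abs(spectrum) / window.sum()
    freqs = np.fft.rfftfreq(x.size, d=1 / fs)
    return freqs, amplitude

# Usage with a synthetic signal: 1x at 29.5 Hz, 2x at 59 Hz, plus noise
fs = 2048.0
t = np.arange(8192) / fs
rng = np.random.default_rng(1)
sig = (np.sin(2 * np.pi * 29.5 * t) + 0.3 * np.sin(2 * np.pi * 59.0 * t)
       + rng.normal(0, 0.05, t.size))
freqs, amp = amplitude_spectrum(sig, fs)
print(freqs[np.argmax(amp)])
```

The window trades a little frequency resolution for much lower spectral leakage, which matters when small fault components sit near a dominant running-speed peak.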

Multimodal Sensor Fusion and Advanced Neural Networks

Recent advances in artificial intelligence are driving data fusion architectures that unify disparate condition monitoring data. Rather than relying solely on scalar measurements, systems increasingly use Vision Transformers (ViTs) to process visual data alongside mechanical operating metrics. By feeding thermographic heat maps, acoustic emission source localizations, and visual defect classifications into a unified neural network together with time-series vibration data, these multimodal frameworks can improve fault classification accuracy and reduce false positives compared with single-modality models.
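
A ViT-based fusion network is well beyond a short example, but the basic idea of combining evidence from several modalities can be shown with a much simpler technique, swapped in here purely for illustration: weighted late fusion, where each modality's model outputs class probabilities that are averaged with per-modality weights. The class names, weights, and probability values below are hypothetical.

```python
import numpy as np

FAULT_CLASSES = ["healthy", "bearing_wear", "misalignment", "lubrication"]

def late_fusion(modality_probs, weights):
    """Weighted average of per-modality class-probability vectors, renormalized to sum to 1."""
    names = list(modality_probs)
    probs = np.array([modality_probs[n] for n in names], dtype=float)
    w = np.array([weights[n] for n in names], dtype=float)
    fused = (w[:, None] * probs).sum(axis=0) / w.sum()
    return fused / fused.sum()

# Hypothetical per-modality outputs for one asset
probs = {
    "vibration":  [0.55, 0.30, 0.10, 0.05],  # vibration alone leans "healthy"
    "thermal":    [0.45, 0.10, 0.05, 0.40],
    "ultrasound": [0.10, 0.15, 0.05, 0.70],
}
weights = {"vibration": 1.0, "thermal": 0.8, "ultrasound": 1.2}
fused = late_fusion(probs, weights)
print(FAULT_CLASSES[int(np.argmax(fused))])  # prints: lubrication
```

Here the combined evidence flags a lubrication problem that the vibration model alone would have missed; learned fusion architectures pursue the same goal with trainable, input-dependent weighting.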

For example, early damage detection in a high-speed bearing can be improved by combining the high-frequency temporal sensitivity of Acoustic Emission testing with the lower-frequency mass-unbalance detection of traditional vibration analysis. Adaptive algorithms such as Variational Mode Decomposition (VMD), which decomposes a complex time-series signal into a set of band-limited modes, each concentrated around its own center frequency, can isolate the frequency bands associated with an incipient bearing fault. VMD's key parameters can in turn be tuned with metaheuristic techniques such as the Crested Porcupine Optimization (CPO) algorithm. When these decomposed vibro-acoustic signal components are fed into architectures such as a CNN-BiGRU network with an attention mechanism (CNN-BiGRU-AT), the system learns spatio-temporal dependencies and can maintain high accuracy in noisy, real-world industrial environments where single-modality approaches degrade.
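
The VMD step can be sketched in a few dozen lines of NumPy. This is a simplified version of the standard frequency-domain update loop, assuming a zero-mean input, no boundary mirroring, and fixed values for the number of modes and the bandwidth penalty `alpha` (parameters that a metaheuristic such as CPO would tune). The CPO search and the CNN-BiGRU-AT classifier are not shown, and the function name `vmd` is hypothetical.

```python
import numpy as np

def vmd(signal, num_modes, alpha=2000.0, tau=0.0, tol=1e-7, max_iter=500):
    """Minimal Variational Mode Decomposition.

    Returns (modes, center_freqs); center frequencies are in cycles/sample.
    Simplifications: no boundary mirroring, DC discarded, uniform initialization.
    """
    x = np.asarray(signal, dtype=float)
    n = x.size
    freqs = np.fft.fftfreq(n)                       # normalized frequency axis
    positive = freqs > 0
    f_hat = np.zeros(n, dtype=complex)
    f_hat[positive] = 2 * np.fft.fft(x)[positive]   # one-sided (analytic) spectrum

    u_hat = np.zeros((num_modes, n), dtype=complex)
    omega = (np.arange(num_modes) + 0.5) * 0.5 / num_modes
    lam = np.zeros(n, dtype=complex)                # Lagrange multiplier

    for _ in range(max_iter):
        u_prev = u_hat.copy()
        for k in range(num_modes):
            # Residual left for mode k, using the latest estimates of the other modes
            residual = f_hat - u_hat.sum(axis=0) + u_hat[k]
            # Wiener-filter-like update centered on omega[k]
            u_hat[k] = (residual + lam / 2) / (1 + 2 * alpha * (freqs - omega[k]) ** 2)
            u_hat[k, ~positive] = 0
            # New center frequency: power-weighted mean frequency of the mode
            power = np.abs(u_hat[k, positive]) ** 2
            omega[k] = np.sum(freqs[positive] * power) / max(np.sum(power), 1e-30)
        lam = lam + tau * (f_hat - u_hat.sum(axis=0))  # dual ascent (inactive when tau = 0)
        change = np.sum(np.abs(u_hat - u_prev) ** 2) / max(np.sum(np.abs(u_prev) ** 2), 1e-30)
        if change < tol:
            break

    modes = np.real(np.fft.ifft(u_hat, axis=1))
    return modes, omega
```

For a 1 kHz-sampled mix of 50 Hz and 200 Hz tones, `vmd(signal, num_modes=2)` should return one mode per tone, with center frequencies near 0.05 and 0.2 cycles/sample (multiply by the sample rate to get hertz).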

Industrial Spatial Digital Twins

This fused analytical data is ultimately placed in spatial context within an Industrial Spatial Digital Twin: a high-fidelity, real-time virtual replica of the physical manufacturing system and its operating environment. Digital twins address the problem of spatial context in maintenance by tying each measurement to its physical location. Using ruggedized edge-computing hardware and intrinsically safe IoT sensors, such as magnet-mounted MEMS accelerometers deployed directly on machinery in potentially explosive atmospheres, the physical asset’s state is continuously synchronized with its 3D digital counterpart.

These digital twins are also increasingly populated with “virtual sensors,” or soft sensors: mathematical or machine-learning models that infer quantities that are not directly measured (e.g., internal thermal stress or flow conditions) from the combined, real-time inputs of physical sensors. This provides insight into inaccessible, highly pressurized, or radiologically hazardous areas of the plant without intrusive physical probes. In infrastructure inspection, simulated counterparts of physical cameras, together with computer vision models, are integrated into 3D digital twin models to generate photorealistic synthetic data. This synthetic data simulates structural damage, spalling, and leakage under controlled lighting and camera angles, and is then used to augment training datasets for AI defect detection, mitigating the scarcity of real-world failure data.
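
A soft sensor can be as simple as a regression model fitted on historical data in which the target quantity was measured (for example, with a temporary probe during commissioning) and then evaluated on live inputs. The Python sketch below uses ordinary least squares via `numpy.linalg.lstsq`; the class name, input variables, and synthetic linear relationship are hypothetical, and practical soft sensors often need nonlinear or physics-informed models.

```python
import numpy as np

class LinearSoftSensor:
    """Infers an unmeasured quantity as a linear function of measured inputs."""

    @staticmethod
    def _design(inputs):
        X = np.asarray(inputs, dtype=float)
        return np.column_stack([np.ones(len(X)), X])  # prepend intercept column

    def fit(self, inputs, target):
        self.coef_, *_ = np.linalg.lstsq(self._design(inputs),
                                         np.asarray(target, dtype=float), rcond=None)
        return self

    def predict(self, inputs):
        return self._design(inputs) @ self.coef_

# Hypothetical commissioning data: [inlet_pressure_bar, flow_m3h, shell_temp_c]
rng = np.random.default_rng(0)
inputs = rng.uniform([5, 50, 40], [10, 150, 90], size=(200, 3))
true_coef = np.array([2.0, 1.5, 0.02, 0.8])  # synthetic: intercept + 3 weights
target = true_coef[0] + inputs @ true_coef[1:] + rng.normal(0, 0.1, 200)

sensor = LinearSoftSensor().fit(inputs, target)
print(sensor.predict([[7.5, 100.0, 65.0]]))  # roughly 67.25 for this synthetic data
```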

Real-Time Centralized Dashboards and Visual Management

To make this complex, multidimensional data actionable for plant managers, reliability engineers, and financial stakeholders, the fused information is routed to centralized asset health monitoring dashboards. These visual management tools are central to effective human-machine interaction. Well-designed interfaces aggregate Key Performance Indicators (KPIs) across all sensor modalities, translating complex algorithmic outputs into simple, color-coded health scores and equipment availability metrics.
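
A color-coded health score is often a weighted roll-up of normalized indicators mapped onto bands. This Python sketch assumes each KPI has already been normalized to a 0 (critical) to 100 (healthy) scale; the KPI names, weights, and band thresholds are hypothetical, and a worst-indicator override keeps a single critical reading from being averaged away.

```python
from dataclasses import dataclass

@dataclass
class Kpi:
    name: str
    score: float   # normalized: 0 = critical, 100 = healthy
    weight: float

def health_status(kpis, green_at=80.0, amber_at=50.0, critical_at=20.0):
    """Return (weighted score, color band) for one asset."""
    total_weight = sum(k.weight for k in kpis)
    score = sum(k.score * k.weight for k in kpis) / total_weight
    # Any single critical KPI forces red, regardless of the weighted average
    if min(k.score for k in kpis) < critical_at:
        return score, "red"
    if score >= green_at:
        return score, "green"
    if score >= amber_at:
        return score, "amber"
    return score, "red"

# Usage: hypothetical chiller KPIs from thermal, gauge-reading, and ultrasound modalities
chiller = [Kpi("thermal", 55, 1.0), Kpi("gauge_pressure", 70, 1.0), Kpi("ultrasound", 60, 1.0)]
print(health_status(chiller))  # amber band
```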

From a single, integrated interface, an engineer can quickly see whether a critical chiller is exhibiting an elevated thermal reading, whether an associated analog gauge is reading outside defined limits (via computer vision), and whether an attached ultrasonic sensor is detecting an incipient compressed air leak. This continuous, automated visual oversight transforms industrial asset monitoring. It moves maintenance operations from a reactive, clipboard-driven, labor-intensive process reliant on subjective human observation to a proactive, data-driven strategy capable of predicting and preventing catastrophic failures before they disrupt production.